Trillion Cell Grand Challenge blog #8 was written by Dr. Scott Imlay, Chief Technical Officer.

Craig Mackey, Senior Research Engineer, created the iso-surface.

We choose to go to the moon in this decade and do the other things, not because they are easy, but because they are hard, because that goal will serve to organize and measure the best of our energies and skills, because that challenge is one that we are willing to accept, one we are unwilling to postpone, and one which we intend to win…

—John F. Kennedy

Iso-Surface Created from One Trillion Cells!

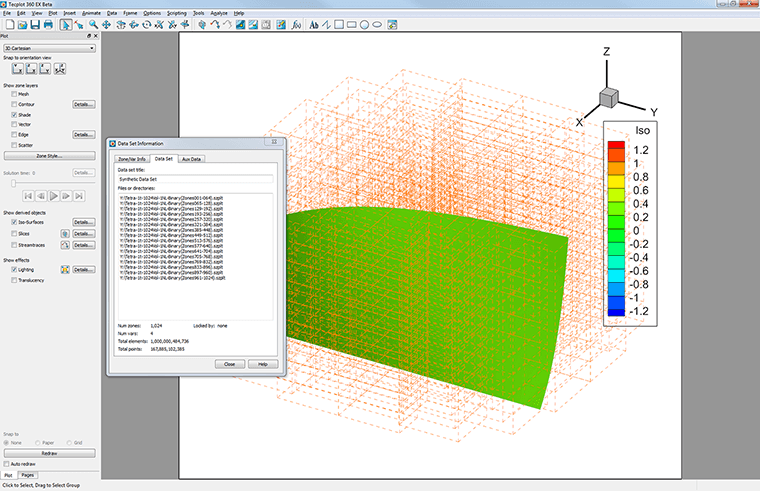

Today, an iso-surface was created in Tecplot 360 from a data set containing one trillion tetrahedral finite-element cells, thus completing the first milestone in the Trillion Cell Grand Challenge. This was no “moon shot”, but it was hard, and it did “serve to organize and measure the best of our energies and skills”. It also forced us to overcome the limits and bottlenecks that would eventually catch up to our software as data set sizes grow over the next 15 years. I’ll talk about some of those changes but, first, let me remind you what the Trillion Cell Grand Challenge entails.

Figure 1. “As promised, here is our first iso-surface of one trillion cells. The data set is 8.5 TB (that’s terabytes) spread across 16 files. It took 120 GB of memory and about 45 minutes to render. The iso-surface is 190 million cells. The orange lines are the bounding boxes of the 1024 zones that make up the data.” – Craig Mackey, Senior Research Engineer at Tecplot, Inc.

Figure 2. My humble desktop computer.

The Challenge

By the end of 2015, Tecplot will visualize a finite-element data set containing one trillion tetrahedral cells, using slices, iso-surfaces, and streamtraces. Furthermore, Tecplot intends to do this visualization using an engineering workstation like the one in Figure 2.

This is actually a picture of my work computer, a Dell Precision T7610 with dual 8-core Intel Xeon processors, an NVIDIA Quadro K4000 video card, 128 GB of memory, and a 16 TB RAID 5 external hard-disk array. This system is probably a little more advanced than what is sitting beside your desk, but systems with these capabilities will be commonplace in the near future. All told, this computer system costs less than $10,000.

Why One Trillion Cells?

Why a trillion cells? As mentioned in the second blog of this series, the NASA 2030 CFD Vision report predicts that large CFD data sets will have a trillion cells per time step in the year 2030.

To be fair, others have visualized a trillion cells on large-scale HPC systems. Their work was significant, but not nearly as difficult as doing it on an engineering workstation. We chose the workstation because it forced us to be efficient and, frankly, the majority of our customers visualize their CFD results on their engineering workstations.

This challenge forced several improvements to Tecplot 360. First, previous versions of Tecplot have limited the size of a zone to 2 billion cells. Until recently, that didn’t seem like much of an issue.

However, when you start talking about a trillion cells per time step, 2 billion cells per zone seems very limiting. We’ve now modified Tecplot to use 64-bit offsets, so zones are limited to roughly nine quintillion (9 × 10^18) cells. It will take many decades before that limit becomes an issue.
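To put those two limits side by side, here is a small arithmetic sketch in Python. It assumes the old 2-billion limit corresponds to a signed 32-bit index and the new limit to a signed 64-bit offset, which matches the numbers quoted above but is an assumption about the internals rather than a description of Tecplot's code. The helper `min_zones_needed` is a hypothetical name used only for illustration.

```python
# Assumption: the "2 billion" and "nine quintillion" limits are the maximum
# values of signed 32-bit and signed 64-bit integers, respectively.
INT32_MAX = 2**31 - 1   # 2,147,483,647
INT64_MAX = 2**63 - 1   # 9,223,372,036,854,775,807 (about 9.2 x 10^18)

TOTAL_CELLS = 10**12    # one trillion cells, the size of the challenge data set
NUM_ZONES = 1024        # zone count quoted in the Figure 1 caption


def min_zones_needed(total_cells: int, max_cells_per_zone: int) -> int:
    """Smallest number of zones that keeps every zone within the limit."""
    # Ceiling division using integers only, to avoid floating-point rounding.
    return -(-total_cells // max_cells_per_zone)


if __name__ == "__main__":
    print(f"32-bit limit per zone: {INT32_MAX:,} cells")
    print(f"64-bit limit per zone: {INT64_MAX:,} cells")
    print(f"Average cells per zone in the challenge data: "
          f"{TOTAL_CELLS / NUM_ZONES:,.0f}")
    print(f"Zones needed under the 32-bit limit: "
          f"{min_zones_needed(TOTAL_CELLS, INT32_MAX)}")
    print(f"Zones needed under the 64-bit limit: "
          f"{min_zones_needed(TOTAL_CELLS, INT64_MAX)}")
```

Under these assumptions, a trillion cells would have to be split into hundreds of zones to respect a 32-bit limit, while a single zone could hold the entire data set with 64-bit offsets.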

Other improvements to Tecplot 360 are currently in development. In particular, for data sets approaching a trillion cells, secondary arrays cause memory usage to scale more nearly linearly with data set size.

Modifications to certain algorithms used in Tecplot 360 will allow the desired sub-linear scaling to continue well beyond a trillion cells! When these changes are complete, I will do a follow-on blog with the new results.

Blogs in the Trillion Cell Grand Challenge Series

Blog #1 The Trillion Cell Grand Challenge

Blog #2 Why One Trillion Cells?

Blog #3 What Obstacles Stand Between Us and One Trillion Cells?

Blog #4 Intelligently Defeating the I/O Bottleneck

Blog #5 Scaling to 300 Billion Cells – Results To Date

Blog #6 SZL Data Analysis—Making It Scale Sub-linearly

Blog #7 Serendipitous Side Effect of SZL Technology