Performance Testing with PLOT3D data

At Tecplot we know your time is important to you and that you have choices when it comes to post-processing. So we’ve done some (more) testing to help you decide which post-processor will perform the best with your data. Of course, performance is dependent on several factors – and which data type you are using is an important one. For today’s post we’ll be diving into performance with PLOT3D data.

One of the primary producers of PLOT3D data is NASA’s Overflow code, which is the code that produced the data we tested with in this post.

Experiment

For this test we used 46 timesteps from a transient simulation of a wind turbine. Together they total 118 GB on disk and 2.18 trillion elements. The final timestep alone is 20.9 GB (grid and solution), is composed of 5863 zones, and has a total of 263 million elements.

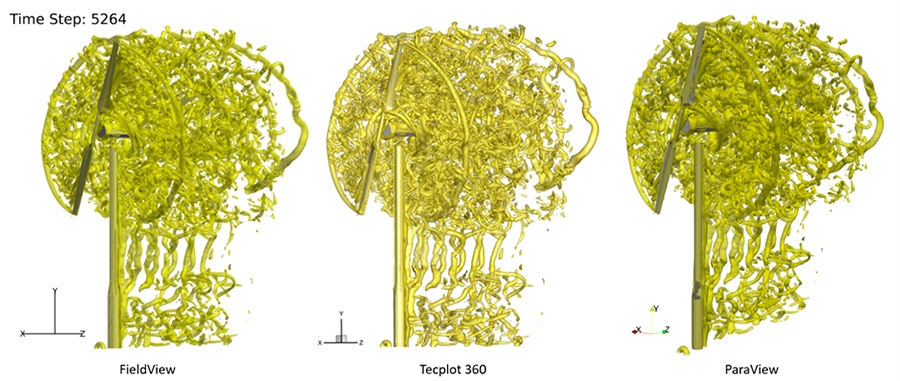

In this experiment we load the data, compute Q-Criterion, draw an isosurface at Q=0.001, and export the image. We repeat this for each grid/solution pair in the time series and capture the execution time and the maximum RAM consumed.
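For readers unfamiliar with it, the Q-criterion is commonly defined from the velocity gradient tensor as Q = ½(‖Ω‖² − ‖S‖²), where S and Ω are the symmetric (strain-rate) and antisymmetric (rotation) parts of that tensor. The sketch below evaluates this formula at a single point in plain Python. It is only an illustration of the definition, not how any of the three post-processors compute it, and the function name and sample tensors are ours.

```python
def q_criterion(grad_u):
    """Return Q for a 3x3 velocity gradient, grad_u[i][j] = d(u_i)/d(x_j)."""
    q = 0.0
    for i in range(3):
        for j in range(3):
            s = 0.5 * (grad_u[i][j] + grad_u[j][i])  # strain-rate component
            w = 0.5 * (grad_u[i][j] - grad_u[j][i])  # rotation component
            q += 0.5 * (w * w - s * s)
    return q


# Solid-body rotation about z, u = (-y, x, 0): rotation dominates, so Q > 0.
rotation = [[0.0, -1.0, 0.0],
            [1.0, 0.0, 0.0],
            [0.0, 0.0, 0.0]]

# Pure planar strain, u = (x, -y, 0): strain dominates, so Q < 0.
strain = [[1.0, 0.0, 0.0],
          [0.0, -1.0, 0.0],
          [0.0, 0.0, 0.0]]

print(q_criterion(rotation), q_criterion(strain))
```

Positive Q marks regions where rotation outweighs strain, which is why a small positive isosurface value such as Q=0.001 picks out vortex structures.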

It’s important to note that we set the Tecplot 360 Load-On-Demand setting to “Minimize Memory Use.” The 360 default setting allows up to 70% consumption of available RAM before unloading the data from RAM. Retaining data in RAM improves performance when moving back and forth between timesteps – but for batch operations this is unnecessary.

Figure 1: Images of the wind turbine results for FieldView, 360, and ParaView (PLOT3D data)

Setup

We conducted our experiments on a Windows 10 machine with 32 logical cores, 128 GB RAM, and an NVIDIA Quadro K4000 graphics card. The data was stored locally on a spinning hard drive to avoid any slowdowns due to network traffic.

The machine was accessed via Remote Desktop and all tests were run unattended in batch mode using:

- PyTecplot for Tecplot 360 2022 R1

- FVX script in batch with FieldView 21

- pvbatch.exe for ParaView 5.10

We used the Python memory-profiler utility to capture timing and RAM information.

The Windows utility RAMMap.exe was used to clear the disk cache before each run. Clearing the disk cache ensured a fair comparison between the post-processors. It also more closely simulates the experience of an end user opening simulation results for the first time after the simulation is complete. We ran each test three times, clearing the disk cache each time. Timing results are an average of the three runs.
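The repeat-and-average procedure described above can be sketched as a small harness. This is a minimal illustration under our own assumptions, not the script used for the test: `average_runtime`, `run`, and `before_each` are hypothetical names, and the cache-clearing step is represented only by a callback.

```python
import time


def average_runtime(run, repeats=3, before_each=None):
    """Call run() `repeats` times and return the mean wall-clock seconds."""
    timings = []
    for _ in range(repeats):
        if before_each is not None:
            before_each()  # hypothetical hook, e.g. clear the disk cache
        start = time.perf_counter()
        run()
        timings.append(time.perf_counter() - start)
    return sum(timings) / len(timings)


# Usage with stand-in callables; a real run would launch the batch job.
cache_clears = []
mean_seconds = average_runtime(
    run=lambda: sum(range(1000)),
    repeats=3,
    before_each=lambda: cache_clears.append("cleared"),
)
print(f"mean of {len(cache_clears)} runs: {mean_seconds:.6f} s")
```

Running the hook inside the loop, outside the timed region, keeps the cache-clearing cost out of the reported time while still guaranteeing a cold start for every run.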

Summary Results

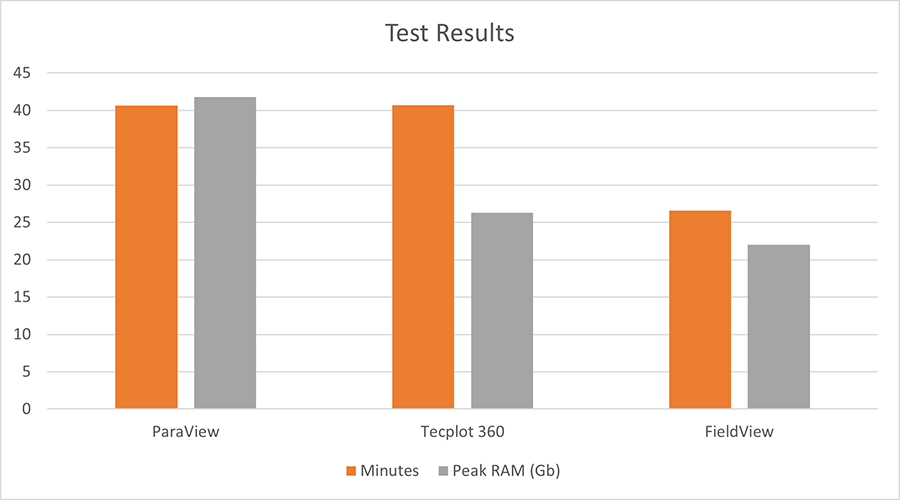

FieldView 21 proved to be the fastest post-processor in our test: 53% faster than both ParaView and Tecplot 360.

Figure 2: FieldView 21 proves to be the fastest and most RAM efficient post-processor for this test

When comparing peak RAM consumption by each post-processor, we found that FieldView also used the least RAM, at 22 GB. 360, while neither faster nor slower than ParaView, used 37% less RAM than ParaView, at 26 GB. ParaView proved to be the least RAM-efficient, consuming 42 GB peak RAM in this test.

We should note that the peak RAM for all post-processors occurred at the final timestep, since that was by far the largest pair of data files in this simulation.
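As a quick check of how a "percent less RAM" figure is derived, the sketch below applies the usual relative-difference formula to the rounded peak values quoted in this post. The helper name is ours. Because the inputs are rounded, the result (about 38%) differs slightly from the 37% quoted above, which was presumably computed from unrounded measurements.

```python
def percent_less(value, baseline):
    """Percentage by which `value` is smaller than `baseline`."""
    return 100.0 * (baseline - value) / baseline


# Rounded peak RAM figures from this post, in GB.
peak_ram_gb = {"FieldView": 22, "Tecplot 360": 26, "ParaView": 42}

saving = percent_less(peak_ram_gb["Tecplot 360"], peak_ram_gb["ParaView"])
print(f"Tecplot 360 vs. ParaView: {saving:.1f}% less peak RAM")
```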

Conclusion

If you’re an Overflow user and want the fastest and most memory-efficient post-processor, you should use FieldView. If you’re a 360 user, you’re still getting a fairly memory-efficient post-processor.

Thankfully, academics don’t need to choose between 360 and FieldView – you get access to both in the Tecplot Academic Suite.

Appendix

Here we dive into the RAM profile over time for each of the post-processors in this test. The plots presented below were produced using the Python memory-profiler module. This module tracks both the execution time and RAM consumption of a process.

Each of the plots below illustrates how the post-processor loads data into RAM and subsequently discards it when it’s no longer needed.
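The two headline numbers read off each plot, total time and peak RAM, can also be pulled from the raw samples. The sketch below assumes the profile was saved as text records of the form `MEM <MiB> <timestamp>`, which is our understanding of the files written by memory-profiler's `mprof run`; treat that format, the function name, and the sample values as assumptions for illustration.

```python
def summarize_profile(lines):
    """Return (elapsed_seconds, peak_mib) from 'MEM <MiB> <timestamp>' records."""
    samples = []
    for line in lines:
        fields = line.split()
        # Skip anything that is not a three-field MEM record (e.g. CMDLINE).
        if len(fields) == 3 and fields[0] == "MEM":
            samples.append((float(fields[2]), float(fields[1])))
    if not samples:
        raise ValueError("no MEM records found")
    times = [t for t, _ in samples]
    peak = max(mem for _, mem in samples)
    return max(times) - min(times), peak


# Made-up records for illustration only (not data from this test).
example = """CMDLINE python batch.py
MEM 100.0 10.0
MEM 2500.0 10.5
MEM 300.0 11.0
""".splitlines()

elapsed, peak = summarize_profile(example)
print(f"elapsed {elapsed:.1f} s, peak {peak:.0f} MiB")
```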

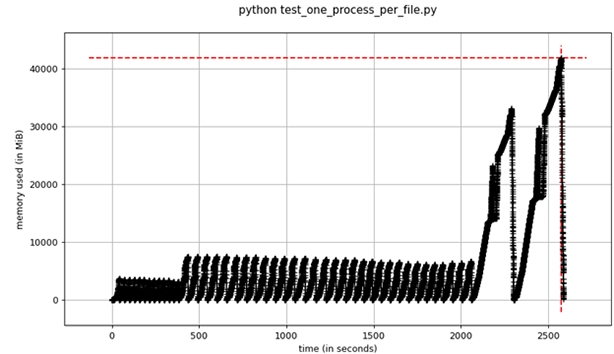

ParaView

In this result, we see that ParaView took ~2505 seconds and used a peak of ~42 GB RAM.

Figure 3: RAM vs. Time for ParaView 5.10 (via pvbatch.exe)

It’s important to note that we started a separate ‘pvbatch.exe’ instance for each grid/solution pair. We did this because ParaView does not easily handle PLOT3D files in which the grid varies over time. With 360 and FieldView we were able to use a single application instance for the entire time series. As you can see in this plot, the RAM consumption of pvbatch.exe goes to zero between each grid/solution file pair, since the executable exits and then restarts for the next timestep. pvbatch.exe utilizes multi-threading for most computations.
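The one-process-per-timestep approach described above can be sketched as a simple driver loop. This is an illustration under our own assumptions rather than the script used for the test: the rendering script name, the file naming pattern, and the helper functions are all hypothetical. The only behavior relied on is that pvbatch runs a Python script and passes the trailing arguments through to it.

```python
import subprocess


def build_commands(pairs, script="render_q_iso.py", exe="pvbatch"):
    """One command line per (grid, solution) pair; all names are hypothetical."""
    return [[exe, script, grid, solution] for grid, solution in pairs]


def run_all(commands):
    """Run each command to completion before starting the next."""
    for cmd in commands:
        # Each process exits when done, so its RAM is released between pairs.
        subprocess.run(cmd, check=True)


# Three illustrative pairs; the test in this post used 46.
pairs = [(f"grid_{i:03d}.x", f"sol_{i:03d}.q") for i in range(1, 4)]
commands = build_commands(pairs)
for cmd in commands:
    print(" ".join(cmd))
# run_all(commands)  # uncomment on a machine with pvbatch on the PATH
```

The trade-off of this design is that application startup cost is paid once per timestep, while memory is guaranteed to return to zero between pairs.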

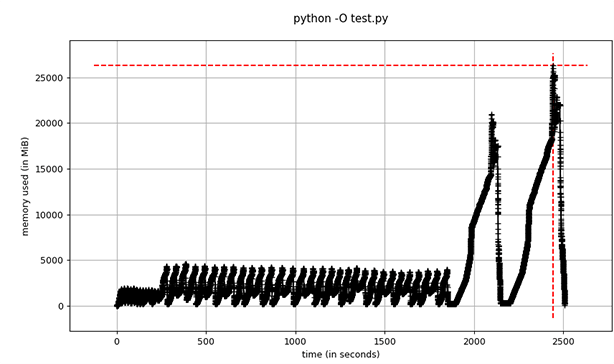

Tecplot 360

This plot shows that 360 took ~2500 seconds and used a peak of ~26 GB RAM. Recall that 360 was run as a single process that loaded every grid/solution file pair in turn. You can see how 360 unloads the data that it no longer needs as we advance through the timesteps. Also recall that the default setting in 360 is to retain data in RAM until consumption reaches 70% of available RAM – but for our test we used the Minimize Memory Use strategy to observe the true RAM requirements. We suggest end users choose this setting for batch operations.

Figure 4: RAM vs. Time for Tecplot 360 2022 R1 (via PyTecplot)

FieldView

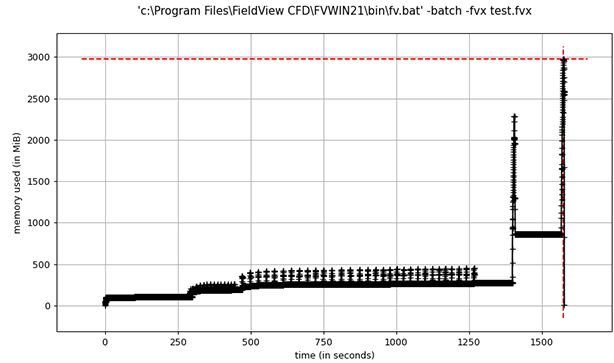

It’s important to note that the plot in Figure 5 does not represent the true RAM profile for FieldView. We ran FieldView MPI-parallel, which spawns additional processes. We used Python memory-profiler with the --include-children command line option, which is supposed to track all child processes, but we found that this did not yield correct results. Because of this, we visually monitored Windows Task Manager and recorded the peak observed RAM.

FieldView took ~1575 seconds. The RAM shown in the plot is from the controller process only, not the worker processes. The actual observed peak RAM was ~22 GB (only ~3 GB RAM was recorded by memory-profiler).

Figure 5: RAM vs. Time for FieldView 21 (RAM is from the controller process only)
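To see why a controller-only trace understates the footprint of a multi-process run, consider summing the RAM of every process at each sampling instant and taking the peak of that total. The sketch below does this for made-up sample values; the numbers, process names, and helper function are purely illustrative and are not measurements from this test.

```python
def peak_total(samples_by_process):
    """Peak of the summed per-process RAM, sampled at common instants."""
    series = list(samples_by_process.values())
    totals = [sum(values) for values in zip(*series)]
    return max(totals)


# Made-up samples in GB, one value per process per sampling instant.
samples = {
    "controller": [1.0, 2.0, 3.0, 2.0],
    "worker_1": [0.0, 4.0, 6.0, 1.0],
    "worker_2": [0.0, 5.0, 5.0, 1.0],
}

controller_peak = max(samples["controller"])
total_peak = peak_total(samples)
print(f"controller only: {controller_peak} GB, all processes: {total_peak} GB")
```

Note that the peak of the total is taken after summing at each instant; adding up each process's individual peak would overstate usage whenever the processes peak at different times.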